Running Tests

Once you have created your tests, retestr.ai offers multiple ways to execute them depending on your workflow.

Manual Execution

The simplest way to run tests is manually via the Dashboard. This is useful when you are developing new tests or debugging a specific issue.

- Project Level: Run all tests in a project.

- Suite Level: Run a specific group of tests.

- Test Level: Run a single test case for rapid iteration.

When starting a run, you can choose the Environment (e.g., Staging vs. Production) and the Branch to associate the results with.

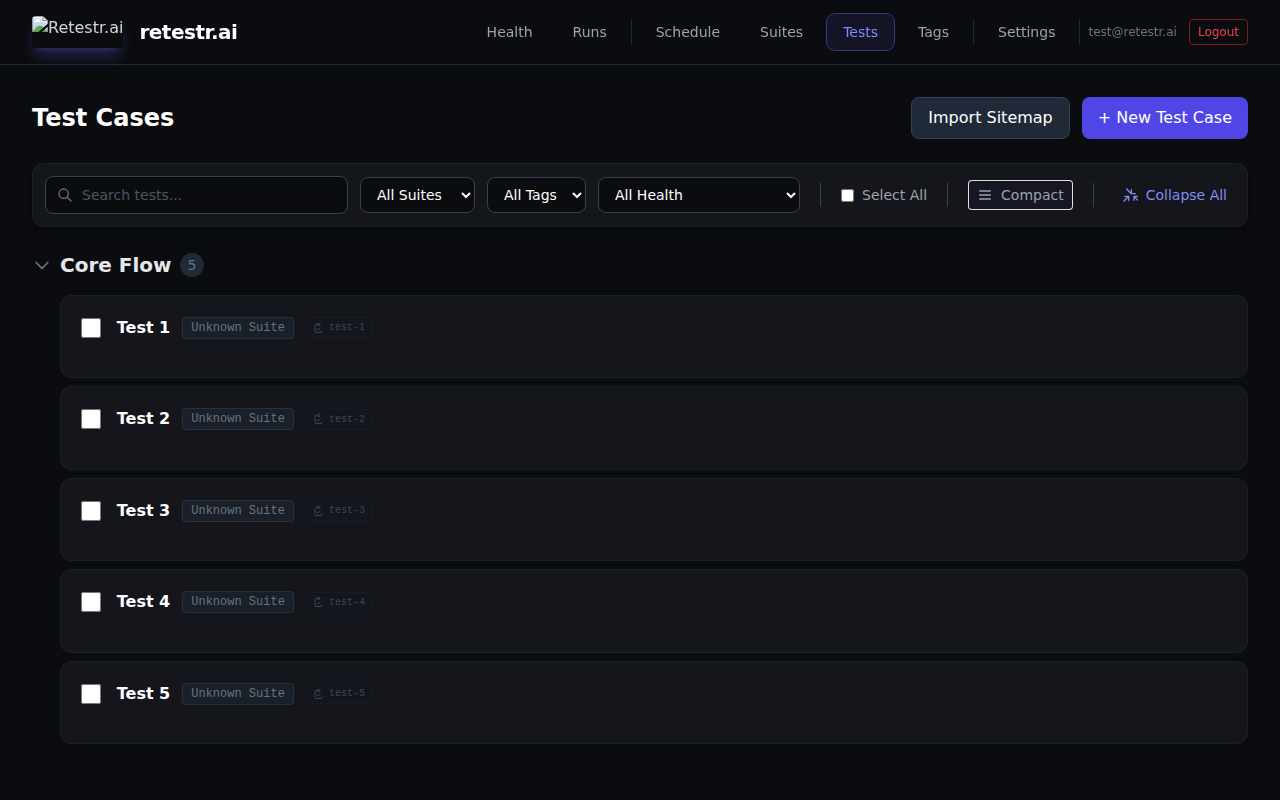

Dashboard Features

Manage your test suite efficiently with powerful organization tools.

Live Progress & ETA

When a test run is active, the dashboard displays a real-time progress bar. The Time Remaining estimate is based on the historical average duration of the tests in the suite.

- Dynamic Favicon: The browser tab icon updates in real time (Spinner, Green Check, Red X) so you can monitor run status while working in other tabs.

- Tab Title Countdown: The active run's progress percentage and estimated time remaining are displayed in your browser's tab title (e.g., `Run #50 (85%) 2m remaining...`), so you can keep an eye on test execution without switching tabs.

- Test Complete Audio Notifications: You can opt in to hear a brief audio chime when a long-running background suite finishes. Configure this in your user settings to play sounds on pass, fail, or both.
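As a rough illustration of how an estimate like this could be derived, the sketch below sums the historical average duration of the tests that have not finished yet and formats a tab title in the style shown above. The `TestTiming` shape and both function names are hypothetical, and the sketch assumes tests run one after another; it is not retestr.ai's actual implementation.

```typescript
// Hypothetical shape: one entry per test in the active run.
interface TestTiming {
  name: string;
  avgDurationMs: number; // historical average duration, assumed to be known
  done: boolean;
}

// Sum the historical averages of the tests that have not finished yet.
function estimateRemainingMs(tests: TestTiming[]): number {
  return tests
    .filter((t) => !t.done)
    .reduce((sum, t) => sum + t.avgDurationMs, 0);
}

// Build a tab title in the style of "Run #50 (85%) 2m remaining...".
function formatTabTitle(runNumber: number, tests: TestTiming[]): string {
  const finished = tests.filter((t) => t.done).length;
  const percent =
    tests.length === 0 ? 100 : Math.round((finished / tests.length) * 100);
  const minutes = Math.ceil(estimateRemainingMs(tests) / 60000);
  return `Run #${runNumber} (${percent}%) ${minutes}m remaining...`;
}

const timings: TestTiming[] = [
  { name: "login", avgDurationMs: 30000, done: true },
  { name: "checkout", avgDurationMs: 90000, done: false },
];
console.log(formatTabTitle(50, timings)); // Run #50 (50%) 2m remaining...
```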

Search & Filtering

- Real-time Search: Filter tests by name or description.

- Filter by Suite/Tag: Drill down into specific areas of your app.

- Sparklines: View the duration history of each test inline to spot performance regressions or timeouts at a glance.

Command Palette

Press Cmd+K (or Ctrl+K) anywhere in the app to open the Command Palette. Quickly jump to a specific test, run, or settings page without lifting your hands from the keyboard.

Keyboard Shortcuts

Navigate your test list quickly without touching the mouse:

- J / K: Move the selection down / up in the list.

- Enter: Open the selected test case for editing.

- ?: Open the full keyboard shortcuts cheat sheet.

Timestamps

Results are displayed with Relative Timestamps (e.g., "5 mins ago") for quick context. Hover over any timestamp to see the exact date and time.

Scheduled Runs

Set up Schedules to ensure your application is always healthy, even when you aren't deploying code.

- Hourly: Great for monitoring production uptime and critical flows.

- Daily: Common for full regression suites running overnight.

- Weekly: Useful for deep, long-running audits.

To configure a schedule, go to your Project Settings > Schedules.

CI/CD Integration

The most powerful way to use retestr.ai is to integrate it into your deployment pipeline, so that visual regressions are caught before they reach production.

See our CI/CD Guide for detailed instructions on triggering runs from GitHub Actions, GitLab CI, Jenkins, and more.

Bulk Actions

Need to manage large test suites efficiently? You can perform actions on multiple tests at once.

- Select tests using the checkboxes in the list (or Select All).

- A floating action bar will appear at the bottom of the screen.

Available Actions

- Run Selected: Trigger a batch job for the selected tests.

- Enable / Disable: Quickly toggle the active status of multiple tests.

- Add / Remove Tags: Apply tags like `#smoke` or `#critical` in bulk.

- Delete: Remove multiple tests (use with caution).

Audit Mode (Broken Link Checker)

Sometimes you want to check for console errors or broken links without waiting for visual snapshots.

- How to trigger: Hold Alt (Option on Mac) while clicking any Run button.

- What it does: Runs the test script but skips screenshots. It only checks for:

  - Console Errors (if configured).

  - Network Failures (4xx/5xx).

  - Script Exceptions.

This mode is significantly faster and uses fewer resources, making it perfect for quick sanity checks.
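To make the three checks concrete, here is a minimal sketch of how events collected during a run could be classified as audit failures. The `AuditEvent` and `AuditOptions` shapes and the `isAuditFailure` helper are assumptions made for illustration; the runner's real data model is not documented here.

```typescript
// Hypothetical event shapes recorded while the test script runs.
type AuditEvent =
  | { kind: "console"; level: "log" | "warning" | "error"; text: string }
  | { kind: "response"; url: string; status: number }
  | { kind: "exception"; message: string };

interface AuditOptions {
  checkConsoleErrors: boolean; // mirrors "if configured" in the list above
}

// Decide whether a single event counts as an audit failure.
function isAuditFailure(event: AuditEvent, options: AuditOptions): boolean {
  if (event.kind === "console") {
    return options.checkConsoleErrors && event.level === "error";
  }
  if (event.kind === "response") {
    return event.status >= 400 && event.status <= 599; // 4xx/5xx
  }
  return true; // script exceptions always count
}

const sample: AuditEvent[] = [
  { kind: "console", level: "error", text: "Uncaught TypeError" },
  { kind: "response", url: "/api/cart", status: 200 },
  { kind: "response", url: "/missing.png", status: 404 },
];
const failures = sample.filter((e) =>
  isAuditFailure(e, { checkConsoleErrors: false }),
);
console.log(failures.length); // 1 (only the 404; console checks are off)
```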

Dry Run Mode (Script Verification)

When writing or editing complex tests, you often need to verify that your Playwright script successfully navigates and finds selectors without creating visual noise or consuming visual check credits.

- How it works: Running a suite or test in "Dry Run" mode executes the Playwright script step-by-step but intentionally skips all `expect(page).toHaveScreenshot()` assertions.

- Use Case: Ideal for validating AI-generated self-healing selectors or confirming wait states before capturing formal baselines.
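One way to picture the skip is a small guard around each visual assertion. The helper below is purely hypothetical (retestr.ai applies Dry Run mode for you, and how it does so internally is not described here); it only shows the idea that the script's steps still execute while the screenshot comparison is bypassed.

```typescript
// A visual check is any async step that captures and compares a screenshot,
// for example () => expect(page).toHaveScreenshot() inside a Playwright test.
type VisualCheck = () => Promise<void>;

// Hypothetical helper: run the visual check unless dry-run mode is on.
async function runVisualCheck(
  dryRun: boolean,
  check: VisualCheck,
): Promise<"checked" | "skipped"> {
  if (dryRun) {
    return "skipped"; // earlier navigation and selector steps already ran
  }
  await check();
  return "checked";
}
```

In a real test the `check` argument would wrap the screenshot assertion, so navigation and selector lookups before it still fail loudly if they are broken.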

Handling Flakiness

Tests can sometimes fail due to network hiccups or dynamic content.

- Retry Failed: If a suite run has failures, you can click "Retry Failed" to re-run only the red tests.

- Smart Retries: Our runners automatically retry steps that fail because of transient network errors, which minimizes false alarms.
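The two behaviours above can be sketched as follows. `selectFailed` and `withRetries` are hypothetical names, and the default of three attempts is an assumption, not a documented retestr.ai setting.

```typescript
type Status = "passed" | "failed";

interface TestResult {
  name: string;
  status: Status;
}

// "Retry Failed": pick only the failed tests from a finished suite run.
function selectFailed(results: TestResult[]): string[] {
  return results.filter((r) => r.status === "failed").map((r) => r.name);
}

// Retry an async step when it throws an error judged to be transient.
async function withRetries<T>(
  step: () => Promise<T>,
  isTransient: (error: unknown) => boolean,
  maxAttempts = 3, // assumed default for this sketch
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return await step();
    } catch (error) {
      lastError = error;
      if (!isTransient(error)) break; // genuine failures are reported at once
    }
  }
  throw lastError;
}
```

Keeping the transient-error test as a separate predicate means a real assertion failure is never retried, only errors the caller has classified as network noise.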

Stopping Runs (Global Panic Button)

If you accidentally trigger a massive test suite or a bad deployment causes thousands of failures, you can immediately halt all execution.

- Who can use it: Organization Admins (`ADMIN` role).

- Where to find it: On the Runs list page in the Dashboard, click the Stop All Active Runs button in the top right header.

- What it does: Immediately marks all `PENDING` and `RUNNING` jobs in your organization as `CANCELLED`. The active runner processes will detect this status change within a few seconds and safely terminate their test execution.
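The status transition can be pictured with a small sketch. The `Job` shape and the `PASSED` and `FAILED` statuses are assumptions added for illustration; only `PENDING`, `RUNNING`, and `CANCELLED` come from the description above.

```typescript
// PASSED and FAILED are assumed terminal statuses for this sketch.
type JobStatus = "PENDING" | "RUNNING" | "PASSED" | "FAILED" | "CANCELLED";

interface Job {
  id: string;
  status: JobStatus;
}

// Mark every PENDING or RUNNING job as CANCELLED; finished jobs are untouched.
function stopAllActiveRuns(jobs: Job[]): Job[] {
  return jobs.map((job): Job =>
    job.status === "PENDING" || job.status === "RUNNING"
      ? { ...job, status: "CANCELLED" }
      : job,
  );
}

// What a runner might check on each poll before starting its next step.
function shouldTerminate(job: Job): boolean {
  return job.status === "CANCELLED";
}
```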

Hammer Mode (Flakiness Repro Loop)

Intermittent failures ("flakes") are notoriously hard to debug. Hammer Mode helps you reproduce them by running a test repeatedly in a tight loop.

- Open the Run Details for a run where a test failed unexpectedly.

- Click the Hammer icon (or find it in the actions menu).

- Configure the number of repetitions (e.g., 50 times).

- Stop on Failure: Enable this to halt execution as soon as the test fails, preserving the exact state for debugging.

This generates a specialized test run that reports pass/fail statistics (e.g., "48 Pass / 2 Fail"), which helps confirm whether the test is truly flaky.
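Conceptually, the loop behind a run like this can be sketched as below. The `hammer` function and `HammerReport` shape are hypothetical; they illustrate the repetition count, the Stop on Failure option, and the pass/fail tally, not the runner's actual code.

```typescript
interface HammerReport {
  passed: number;
  failed: number;
}

// Run `test` up to `repetitions` times; a thrown error counts as a failure.
async function hammer(
  test: () => Promise<void>,
  repetitions: number,
  stopOnFailure: boolean,
): Promise<HammerReport> {
  const report: HammerReport = { passed: 0, failed: 0 };
  for (let i = 0; i < repetitions; i++) {
    try {
      await test();
      report.passed++;
    } catch {
      report.failed++;
      if (stopOnFailure) break; // halt right after the first failure
    }
  }
  return report;
}

// A stand-in "flaky" test that fails on every 25th attempt.
let attempt = 0;
const flaky = async (): Promise<void> => {
  attempt++;
  if (attempt % 25 === 0) throw new Error("flake");
};

hammer(flaky, 50, false).then((r) =>
  console.log(`${r.passed} Pass / ${r.failed} Fail`), // 48 Pass / 2 Fail
);
```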

Automated Quarantine Workflow

To prevent flaky tests from blocking your deployments, retestr.ai includes an Automated Quarantine system.

- Trigger: If a test case flaps (alternates between passing and failing) more than 3 times within a 24-hour window, it is automatically marked as Quarantined.

- Effect: Quarantined tests still run, but their failures do not fail the build. They are marked as "Unstable" in the UI.

- Notification: The test owner is notified via email/Slack when a test enters quarantine.

- Resolution: You must manually un-quarantine the test (via the Test Settings) after fixing the underlying issue.
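The trigger rule can be sketched as a small function. This sketch assumes that one "flap" is a pass immediately followed by a fail, and that the window is the 24 hours ending at the current time; the exact definition retestr.ai uses may differ, and all names here are hypothetical.

```typescript
interface RunOutcome {
  finishedAt: number; // epoch milliseconds
  passed: boolean;
}

const WINDOW_MS = 24 * 60 * 60 * 1000; // 24-hour window
const MAX_FLAPS = 3;

// Count pass-to-fail transitions among runs in the window ending at `now`.
function countFlaps(history: RunOutcome[], now: number): number {
  const recent = history
    .filter((r) => r.finishedAt > now - WINDOW_MS && r.finishedAt <= now)
    .sort((a, b) => a.finishedAt - b.finishedAt);
  let flaps = 0;
  let previousPassed: boolean | undefined;
  for (const run of recent) {
    if (previousPassed === true && !run.passed) flaps++;
    previousPassed = run.passed;
  }
  return flaps;
}

// "More than 3 times" means the fourth flap triggers quarantine.
function shouldQuarantine(history: RunOutcome[], now: number): boolean {
  return countFlaps(history, now) > MAX_FLAPS;
}
```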

Test Results

When viewing test results, you get detailed metadata to help with debugging:

- Environment Badges: See exactly which browser (Chrome/Firefox/WebKit), OS (Linux/Windows/Mac), and viewport size were used for the run.

- Quick Rerun: Hover over any test result card to see a "Play" button for instantly re-running just that single test case.

Artifact Downloads

For deep debugging or offline analysis, you can download all artifacts generated during a run.

- Open the Run Details page.

- Click the Download Artifacts button (usually in the header actions).

- This generates and downloads a structured ZIP file containing:

  - `screenshots/` (Actual, Baseline, Diff images).

  - `traces/` (Playwright trace files for use with `playwright show-trace`).

  - `logs/` (Console logs and network HAR files).