Creating Tests

retestr.ai gives you flexibility in how you create tests, from no-code recording to full-code customization.

Hierarchy

To keep things organized, we use a simple hierarchy:

- Projects: Your high-level application (e.g., "Customer Portal").

- Suites: Groupings of related tests (e.g., "Login Flow", "Checkout").

- Test Cases: The actual scenarios being tested.

Magic Recorder

The Magic Recorder allows you to record a browser session and automatically convert your actions into a stable Playwright script.

- Click Create Test.

- Enter the starting URL of your application.

- A remote browser window will open in your dashboard.

- Interact with your site normally (Click, Type, Scroll).

- The recorder captures every action and generates code in real-time.

- Click Save to create the test case.

Best For: Most user flows, happy paths, and quick regression coverage.

Note: The Magic Recorder uses playwright codegen running on our secure cloud servers.

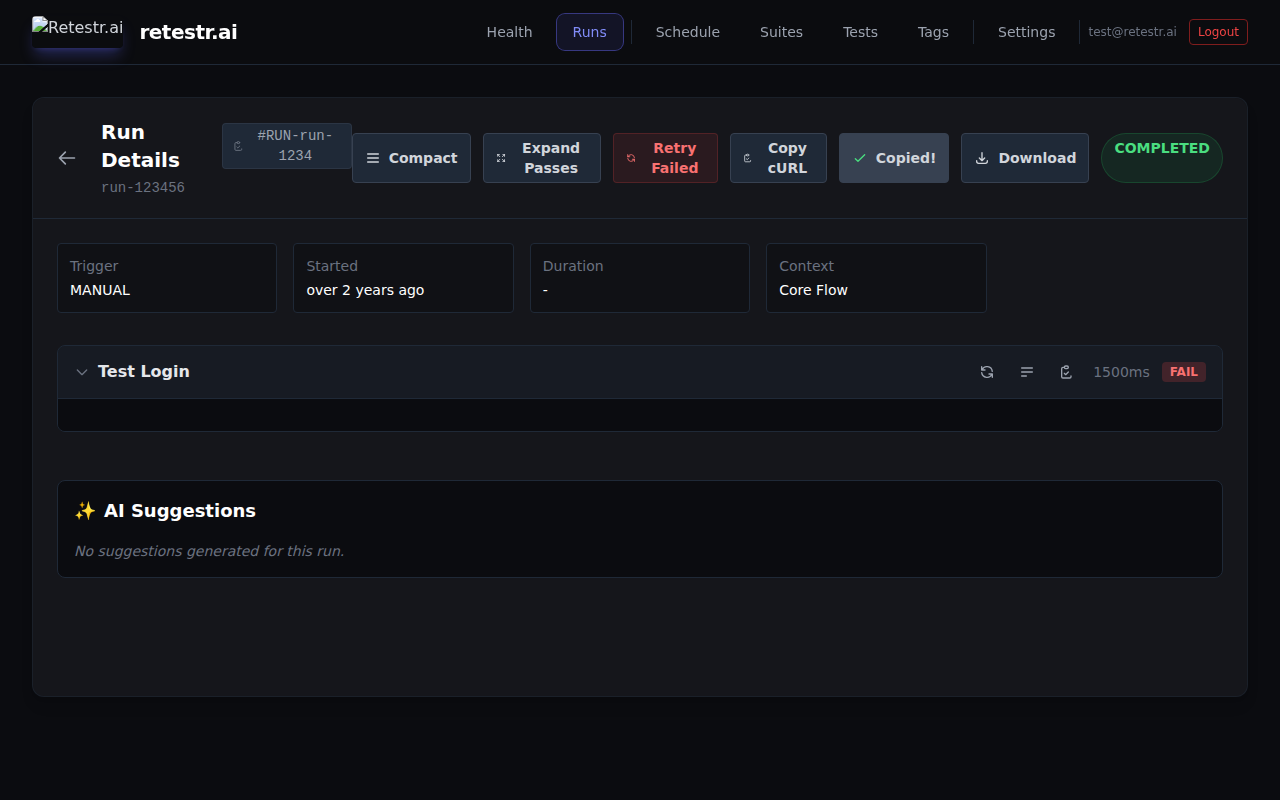

AI-Powered Suggestions

If you have an existing test case but want to improve coverage, you can use our Generative AI engine to suggest variations.

- Open an existing Test Case.

- Scroll down to the AI Suggestions panel.

- Click Generate New to have the AI analyze your current test script and execution history (including screenshots) to propose relevant variations.

- Optional: You can provide a custom prompt (e.g., "Add a negative test case for invalid password").

- Review the suggested code and click Accept to instantly create a new test case.

Best For: Expanding test coverage, finding edge cases, and generating negative tests without writing code from scratch.

Sitemap Import

Rapidly bootstrap your test suite by importing URLs directly from your sitemap.xml.

- Navigate to the Suites view.

- Click the Import from Sitemap button (top right).

- Enter your sitemap URL (e.g., https://example.com/sitemap.xml).

- Select the target Project and Suite (or create new ones).

- retestr.ai will parse the XML and automatically create a TestCase for each URL found.

Best For: Static sites, marketing pages, and quickly covering every route listed in your sitemap.
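The parsing step of a sitemap import can be pictured with a small sketch. This is not retestr.ai's importer: the function name extractSitemapUrls is hypothetical, and a regular expression stands in for a real XML parser to keep the example dependency-free. It does not handle sitemap index files or XML entities.

```typescript
// Hypothetical helper: pull every <loc> URL out of a sitemap.xml document.
function extractSitemapUrls(xml: string): string[] {
  const found: string[] = [];
  const locPattern = /<loc>\s*([^<\s]+)\s*<\/loc>/g;
  let match: RegExpExecArray | null;
  while ((match = locPattern.exec(xml)) !== null) {
    const url = match[1];
    // Skip duplicates so each URL would map to exactly one test case.
    if (url && found.indexOf(url) === -1) {
      found.push(url);
    }
  }
  return found;
}
```

Each returned URL would then become the starting URL of one generated test case.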

Code Editor (Advanced)

For scenarios that require complex logic, we provide a powerful Code Editor built directly into the dashboard.

Why use the Code Editor?

While the Magic Recorder is great for simple flows, sometimes you need more control:

- Dynamic Data: You need to generate a random email address for sign-up testing.

- Complex Waits: You need to wait for a specific API response or animation to finish before taking a screenshot.

- Custom Selectors: You want to target a specific element using a robust data-testid attribute.

- Conditional Logic: You need to handle A/B tests or feature flags (e.g., "If pop-up appears, close it").
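As an illustration of the Dynamic Data case, here is a minimal sketch of a helper that produces a fresh email address on every call. The name uniqueEmail and its default arguments are assumptions made for this example, not part of the retestr.ai snippet library.

```typescript
// Hypothetical helper for sign-up tests: returns a different address on each call.
let emailCounter = 0;

function uniqueEmail(prefix: string = "qa", domain: string = "example.com"): string {
  emailCounter += 1; // guarantees uniqueness within a single run
  const stamp = Date.now().toString(36); // varies between runs
  const random = Math.random().toString(36).slice(2, 8); // extra entropy for parallel runs
  return `${prefix}+${stamp}${emailCounter}${random}@${domain}`;
}
```

In a Playwright script the result could be passed to a fill action on the sign-up field, for example one located with Playwright's page.getByTestId() and a test id of your own choosing.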

Features

- Standard Playwright: We use standard Playwright syntax, so if you know JavaScript/TypeScript, you already know how to use it.

- Monaco Editor: Built-in VS Code-like editor with syntax highlighting and auto-completion.

- Snippet Library: Access a library of reusable code blocks (e.g., "Login", "Clear Cookies") shared across your team. Click a snippet in the sidebar to insert it at your cursor.

- Test Cloning: Quickly duplicate an existing test case to modify it for a new scenario.

- Markdown Descriptions: Use Markdown to document your tests with links, lists, and formatting.

- Version History: Made a mistake? Click the History button in the editor toolbar to view past versions of your script. You can see when each version was saved and Restore it with a single click.

AI Self-Healing

retestr.ai uses an advanced AI Self-Healing Engine to automatically repair broken tests at runtime.

How it works

- Failure Detection: If a click, fill, or locator action fails (e.g., "Element not found"), the runner catches the error.

- Visual Analysis: It captures a screenshot of the current page state and sends it to the Self-Healing Engine.

- Heal: The AI analyzes the visual context to identify the intended element and generates a new robust selector (e.g., finding the "Submit" button even if its ID changed).

- Auto-Retry: The runner retries the action with the new selector.

- Report: If successful, the test continues to pass, and a Healing Event is recorded in the test results, allowing you to update your script permanently.
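The control flow of these five steps can be modelled roughly as follows. This is a simplified, synchronous sketch with hypothetical names (runWithHealing, attempt, suggestSelector). Real Playwright actions are asynchronous, and the Self-Healing Engine's actual interface is not described here.

```typescript
// All names are hypothetical; this models the control flow only.
interface HealingEvent {
  originalSelector: string;
  healedSelector: string;
}

interface ActionResult {
  ok: boolean;
  selectorUsed: string;
  healingEvent?: HealingEvent;
}

function runWithHealing(
  selector: string,
  attempt: (selector: string) => boolean, // false means "element not found"
  suggestSelector: (failedSelector: string) => string | null // stand-in for the Self-Healing Engine
): ActionResult {
  // Step 1: try the action with the selector from the script.
  if (attempt(selector)) {
    return { ok: true, selectorUsed: selector };
  }
  // Steps 2-3: on failure, ask the healing step for a replacement selector.
  const healed = suggestSelector(selector);
  if (healed === null) {
    return { ok: false, selectorUsed: selector };
  }
  // Step 4: retry once with the healed selector.
  if (attempt(healed)) {
    // Step 5: record a healing event so the script can be updated later.
    return {
      ok: true,
      selectorUsed: healed,
      healingEvent: { originalSelector: selector, healedSelector: healed },
    };
  }
  return { ok: false, selectorUsed: healed };
}
```

Returning the healing event alongside the result mirrors the idea that a healed test still passes while leaving a record for a permanent fix.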

3D & WebGL Testing

Testing 3D applications (Three.js, WebGL) is historically difficult because DOM selectors don't work inside the canvas. retestr.ai solves this with deep Scene Graph Integration.

Scene Object Assertion

You can assert the existence of 3D objects by name, even if they aren't in the DOM:

// Assert that the 'RedCube' mesh exists in the Three.js scene
await expect(page).toHaveThreeObject('RedCube');

3D Self-Healing

If a 3D object is renamed or moved in the scene graph (e.g., developers renamed HeroCharacter to HeroCharacter_v2), the runner attempts to recover instead of failing the test:

- The runner detects the missing object.

- It captures the full Scene Graph state.

- It uses fuzzy matching and structural analysis to find the object under its new name.

- It "heals" the test and reports the change.
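To make the fuzzy-matching step concrete, here is a sketch that picks the closest object name by edit distance. The algorithm retestr.ai actually uses is not documented here. Levenshtein distance, the function names, and the 0.4 threshold are all assumptions chosen for illustration, and the structural-analysis part is not modelled.

```typescript
// Classic dynamic-programming Levenshtein distance, computed one row at a time.
function editDistance(a: string, b: string): number {
  let previous: number[] = [];
  for (let j = 0; j <= b.length; j++) previous.push(j);
  for (let i = 1; i <= a.length; i++) {
    const current: number[] = [i];
    for (let j = 1; j <= b.length; j++) {
      const cost = a.charAt(i - 1) === b.charAt(j - 1) ? 0 : 1;
      current.push(
        Math.min(
          previous[j]! + 1, // deletion
          current[j - 1]! + 1, // insertion
          previous[j - 1]! + cost // substitution
        )
      );
    }
    previous = current;
  }
  return previous[b.length]!;
}

// Hypothetical helper: return the scene object whose name is closest to the
// missing one, or null if nothing is similar enough (threshold is an assumption).
function findClosestName(missing: string, sceneNames: string[], maxRatio: number = 0.4): string | null {
  let best: string | null = null;
  let bestRatio = Infinity;
  for (const name of sceneNames) {
    const distance = editDistance(missing.toLowerCase(), name.toLowerCase());
    const ratio = distance / Math.max(missing.length, name.length, 1);
    if (ratio < bestRatio) {
      best = name;
      bestRatio = ratio;
    }
  }
  return bestRatio <= maxRatio ? best : null;
}
```

With the rename from the example, findClosestName('HeroCharacter', ['MainCamera', 'HeroCharacter_v2']) resolves to 'HeroCharacter_v2', while unrelated names fall outside the threshold and yield null.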

Manual Scene Capture

You can also manually capture the state of the 3D scene at any point for debugging:

// Save 'scene-graph.json' to artifacts
await page.evaluate(() => retestr.captureSceneGraph('checkpoint-1'));

Handling Noise & Dynamic Content

Visual tests often fail because of dynamic data (timestamps, ads, rotating carousels) or rendering noise. retestr.ai provides multiple layers of Noise Suppression to ensure stability.

1. Smart Auto-Masking

When enabled in the Test Settings, the system uses heuristics to automatically detect and hide common dynamic elements before taking a screenshot.

- UUIDs (e.g., 123e4567-e89b...)

- Dates (e.g., 2023-10-27, Oct 27, 2023)

- Relative Times (e.g., 5 mins ago)

- Emails (e.g., user@example.com)

The masked elements appear as magenta boxes in the screenshot.
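Heuristics of this kind are often built from text patterns. The sketch below classifies a text node against one pattern per category listed above. The patterns and the name classifyDynamicText are illustrative assumptions, since the product's real detection rules are not documented here.

```typescript
// Hypothetical patterns, one per category; checked in order, first match wins.
const dynamicPatterns: { kind: string; pattern: RegExp }[] = [
  { kind: "uuid", pattern: /\b[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}\b/i },
  { kind: "iso-date", pattern: /\b\d{4}-\d{2}-\d{2}\b/ },
  { kind: "long-date", pattern: /\b(?:Jan|Feb|Mar|Apr|May|Jun|Jul|Aug|Sep|Oct|Nov|Dec)[a-z]*\.? \d{1,2}, \d{4}\b/ },
  { kind: "relative-time", pattern: /\b\d+\s*(?:sec|min|hour|hr|day|week|month|year)s?\s+ago\b/i },
  { kind: "email", pattern: /\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b/ },
];

// Returns the category of dynamic content found in the text, or null if none.
function classifyDynamicText(text: string): string | null {
  for (const { kind, pattern } of dynamicPatterns) {
    if (pattern.test(text)) return kind;
  }
  return null;
}
```

An element whose text yields a non-null category would be a candidate for masking. Static labels such as "Checkout" return null and stay visible.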

2. Visual Masking Editor

For specific regions that are always dynamic (e.g., a "Recommended for You" widget), you can manually draw masks on the baseline.

- Open a failed test run or an existing Baseline.

- Click the Edit Masks button in the diff viewer.

- Draw rectangles over the areas to ignore.

- Click Save. These regions will be ignored in all future runs.

3. Global Masks

If you have elements that appear on every page (e.g., a cookie banner or a live chat widget), define a Global Mask.

- Go to Project Settings -> Global Masks.

- Add a CSS selector (e.g., #intercom-container) or a name.

- This selector will be hidden/masked across your entire test suite.

Advanced Configuration

Auto-Wait & Quiescence (Advanced)

To reduce flakiness caused by animations or ongoing network requests, you can configure the runner to wait for a "Quiescent" state before taking a screenshot.

This is currently configured via the quiescenceSettings JSON field in the Test Case or API.

{
  "quiescenceSettings": {
    "networkIdle": true,
    "cssAnimations": true,
    "timeout": 5000
  }
}

- networkIdle: Waits for the network to be idle (no active requests for at least 500ms).

- cssAnimations: Waits for all CSS animations on the page to complete.

- timeout: Max time to wait (in ms) before proceeding anyway.
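How the three fields interact can be sketched as a single decision function. The QuiescenceSettings shape follows the JSON above. The PageSnapshot type and the function canTakeScreenshot are hypothetical, and the real runner's internals may differ.

```typescript
interface QuiescenceSettings {
  networkIdle: boolean;
  cssAnimations: boolean;
  timeout: number; // milliseconds
}

// Hypothetical sample of page state that a runner might poll while waiting.
interface PageSnapshot {
  elapsedMs: number; // time since the wait began
  activeRequests: number; // requests currently in flight
  msSinceLastRequest: number; // time since the last request finished
  runningAnimations: number; // CSS animations still playing
}

const NETWORK_IDLE_WINDOW_MS = 500; // idle window from the networkIdle description

function canTakeScreenshot(settings: QuiescenceSettings, page: PageSnapshot): boolean {
  // timeout: proceed anyway once the maximum wait has elapsed.
  if (page.elapsedMs >= settings.timeout) return true;
  const networkOk =
    !settings.networkIdle ||
    (page.activeRequests === 0 && page.msSinceLastRequest >= NETWORK_IDLE_WINDOW_MS);
  const animationsOk = !settings.cssAnimations || page.runningAnimations === 0;
  return networkOk && animationsOk;
}
```

Checking the timeout first reflects its role as an upper bound: a page that never settles delays the screenshot by at most that long.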

Smart Auto-Retries

Network glitches or temporary API failures can cause flaky tests. Instead of a simple retry loop, retestr.ai supports Exponential Backoff to handle these transient issues intelligently.

Configure this in the Retry Strategy section of the test editor:

- Max Retries: How many times to retry the test before failing (e.g., 3).

- Initial Delay: How long to wait before the first retry (e.g., 1000ms).

- Backoff Factor: Multiplier for the delay after each attempt (e.g., 2.0).

Example:

With Initial Delay 1000ms and Factor 2.0:

- Test Fails -> Wait 1s -> Retry 1

- Retry 1 Fails -> Wait 2s -> Retry 2

- Retry 2 Fails -> Wait 4s -> Retry 3
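The schedule in this example follows the usual exponential backoff formula, delay = initialDelay × factor^(retry − 1). A minimal sketch, where the function name backoffDelays is hypothetical and the assumption is that no jitter or cap is applied:

```typescript
// Returns the wait (in ms) before each retry, in order.
function backoffDelays(maxRetries: number, initialDelayMs: number, backoffFactor: number): number[] {
  const delays: number[] = [];
  for (let attempt = 0; attempt < maxRetries; attempt++) {
    // The first retry waits initialDelayMs; each later retry multiplies by the factor.
    delays.push(initialDelayMs * Math.pow(backoffFactor, attempt));
  }
  return delays;
}
```

Calling backoffDelays(3, 1000, 2.0) returns [1000, 2000, 4000], matching the three waits listed above.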

Responsive Breakpoint Matrix

Automatically run the same test across multiple viewports (Mobile, Tablet, Desktop) to catch responsive layout issues.

- Configure in the Viewports section of the test editor.

- Select from presets (iPhone, Pixel, iPad) or define custom dimensions.

Soft Assertions

Use expect.soft(...) to allow the test to continue even if an assertion fails. This lets you capture multiple visual regressions in a single run.

Dry Run (Script Check) Mode

When creating or editing complex Playwright tests, you may want to verify that your script successfully navigates and finds selectors without generating noise or using your Visual Check credits.

- Configure mode: 'dry-run' in the API or trigger a "Dry Run" from the test editor UI.

- The runner will execute the Playwright script step-by-step to verify logic but will intentionally bypass all expect(page).toHaveScreenshot() assertions.

Performance Budget Guardrails

Visual regressions often come with performance regressions (e.g., a new unoptimized image). You can define strict performance budgets in your test configuration:

- LCP (Largest Contentful Paint): Fail if loading takes longer than X ms (e.g., 2000).

- CLS (Cumulative Layout Shift): Fail if the layout shifts more than X (e.g., 0.1).

- FPS (Frames Per Second): Fail if the animation smoothness drops below X (e.g., 30).

If the recorded trace violates these thresholds, the test will fail even if the screenshot matches.
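The threshold check can be sketched as a comparison of measured values against the budget. The field names (maxLcpMs, maxCls, minFps) and the function budgetViolations are assumptions for this example, not the product's configuration schema.

```typescript
// Hypothetical shapes: a budget (all limits optional) and the measured metrics.
interface PerformanceBudget {
  maxLcpMs?: number;
  maxCls?: number;
  minFps?: number;
}

interface PerformanceMetrics {
  lcpMs: number;
  cls: number;
  fps: number;
}

// Returns one message per violated threshold; an empty array means the budget holds.
function budgetViolations(metrics: PerformanceMetrics, budget: PerformanceBudget): string[] {
  const violations: string[] = [];
  if (budget.maxLcpMs !== undefined && metrics.lcpMs > budget.maxLcpMs) {
    violations.push(`LCP ${metrics.lcpMs}ms exceeds ${budget.maxLcpMs}ms`);
  }
  if (budget.maxCls !== undefined && metrics.cls > budget.maxCls) {
    violations.push(`CLS ${metrics.cls} exceeds ${budget.maxCls}`);
  }
  if (budget.minFps !== undefined && metrics.fps < budget.minFps) {
    violations.push(`FPS ${metrics.fps} is below ${budget.minFps}`);
  }
  return violations;
}
```

A run would fail on a non-empty result regardless of the screenshot comparison.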

Strict Guardrails

Sometimes visual correctness isn't enough. You might want to fail the test if there are underlying issues that don't show up on screen.

Console Guardrails

By default, console errors (like TypeError: Cannot read property of undefined) are logged but don't fail the test.

- Enable Fail on Console Error in the Test Settings to enforce zero-tolerance for JS errors.

- You can add Ignore Patterns (Regex supported) to whitelist benign errors (e.g., .*analytics.*).

Network Guardrails

Ensure your API is healthy.

- Enable Fail on Network Error to fail the test if any network request returns a 4xx or 5xx status code.

- Use Ignore Patterns (Regex) to skip known issues (e.g., .*\/tracking\/pixel.*).
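The interaction between the status check and the ignore patterns might look like the sketch below. It assumes patterns are matched against the request URL, which the text does not state explicitly. The ObservedResponse type and the function networkFailures are hypothetical.

```typescript
interface ObservedResponse {
  url: string;
  status: number;
}

// Returns the responses that would fail the test: 4xx/5xx statuses
// whose URL matches none of the ignore patterns.
function networkFailures(responses: ObservedResponse[], ignorePatterns: string[]): ObservedResponse[] {
  const ignored = ignorePatterns.map((source) => new RegExp(source));
  return responses.filter((response) => {
    const isError = response.status >= 400 && response.status <= 599;
    const isIgnored = ignored.some((pattern) => pattern.test(response.url));
    return isError && !isIgnored;
  });
}
```

With the pattern from the example, a 404 on a tracking pixel is skipped while a 500 from an application endpoint still fails the run.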

Custom Metrics

Go beyond visual diffs by tracking custom numeric values (e.g., memory usage, load times, frame rates).

// Report a custom metric
window.retestr.reportMetric('Memory Usage (MB)', 128);
window.retestr.reportMetric('FPS', 60);

These metrics are collected during the run and displayed in the Custom Metrics card in the run results.

Organizing Tests

Environments

Define Environments (e.g., Staging, Production) in your project settings. This allows you to write one test script and run it against multiple URLs.

Read the full Environments guide to learn how to configure base URLs and variables.

Tags

Add Tags (e.g., #smoke, #mobile, #nightly) to categorize your tests. You can then trigger test runs that only target specific tags.

Tag Manager: Navigate to the Tags page in the dashboard to manage your project's tags.

- Create: Add new tags with custom colors to visually distinguish them.

- Delete: Remove unused tags from the project.

- Visualize: See how many tests are assigned to each tag.

Test Dependencies

You can define dependencies between tests to ensure they run in a specific order.

- In the Test Settings, use the Dependencies section to select tests that must pass before the current test runs.

- If a dependency fails, the dependent test will be skipped, saving time and resources.
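The skip behaviour can be modelled with a short sketch. It assumes tests are already listed with dependencies before their dependents, and that a skipped dependency also blocks the tests that rely on it. The names TestNode and runWithDependencies are hypothetical.

```typescript
type Outcome = "passed" | "failed" | "skipped";

interface TestNode {
  id: string;
  dependsOn: string[];
}

// Runs tests in the given order; a test is skipped unless every dependency passed.
function runWithDependencies(
  tests: TestNode[],
  execute: (id: string) => boolean // true when the test passes
): Record<string, Outcome> {
  const outcomes: Record<string, Outcome> = {};
  for (const test of tests) {
    const blocked = test.dependsOn.some((dep) => outcomes[dep] !== "passed");
    if (blocked) {
      outcomes[test.id] = "skipped"; // never executed, so no time is spent on it
      continue;
    }
    outcomes[test.id] = execute(test.id) ? "passed" : "failed";
  }
  return outcomes;
}
```

If a "login" test fails, a "checkout" test that depends on it is skipped, and so is anything that depends on "checkout".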

Bulk Test Operations

Manage large test suites efficiently by selecting multiple tests from the list view. A floating action bar will appear, allowing you to:

- Run Selected: Trigger a job for all selected tests (Standard or Audit Mode).

- Enable / Disable: Toggle the enabled status of multiple tests at once.

- Add / Remove Tags: Bulk apply or remove tags like #regression or #smoke.

- Delete: Permanently delete multiple tests.

Test Maintenance

Test Case Expiration

For temporary tests (e.g., Black Friday banners, holiday promotions) that should only run for a limited time, you can set an Expiration Date.

- In the Test Settings, set the Expires At field.

- Once the date passes, the test case is automatically disabled by the system.

- This prevents "Zombie Tests" from failing your build after a campaign ends.